2026-02-23

Introducing Rubbr

I'm launching Rubbr, a controlled AI-driven software delivery platform for legacy systems

By Daniel Jarjoura

AI agents are becoming very good at writing code. But writing code was never the hard part.

The hard part is understanding the business, functional and architectural context of existing systems well enough to deliver high-quality features safely and consistently. In most established organisations, that context does not exist in a clean, structured form. It lives in fragmented legacy code, incomplete documentation, undocumented trade-offs and in the heads of a few individuals who know how the system behaves.

For years, this fragility created bottlenecks and dependencies on key individuals. Now we are introducing highly capable AI coding agents into that environment, and we are surprised when the result is chaos.

That is not an AI failure. It is a context failure.

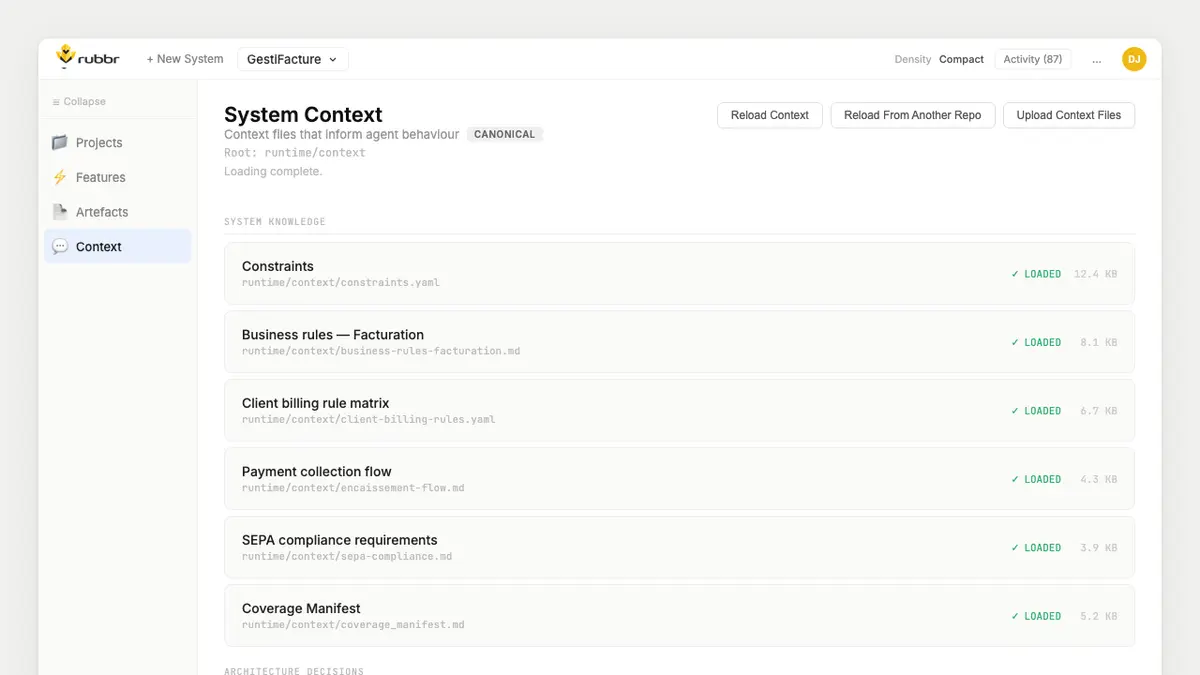

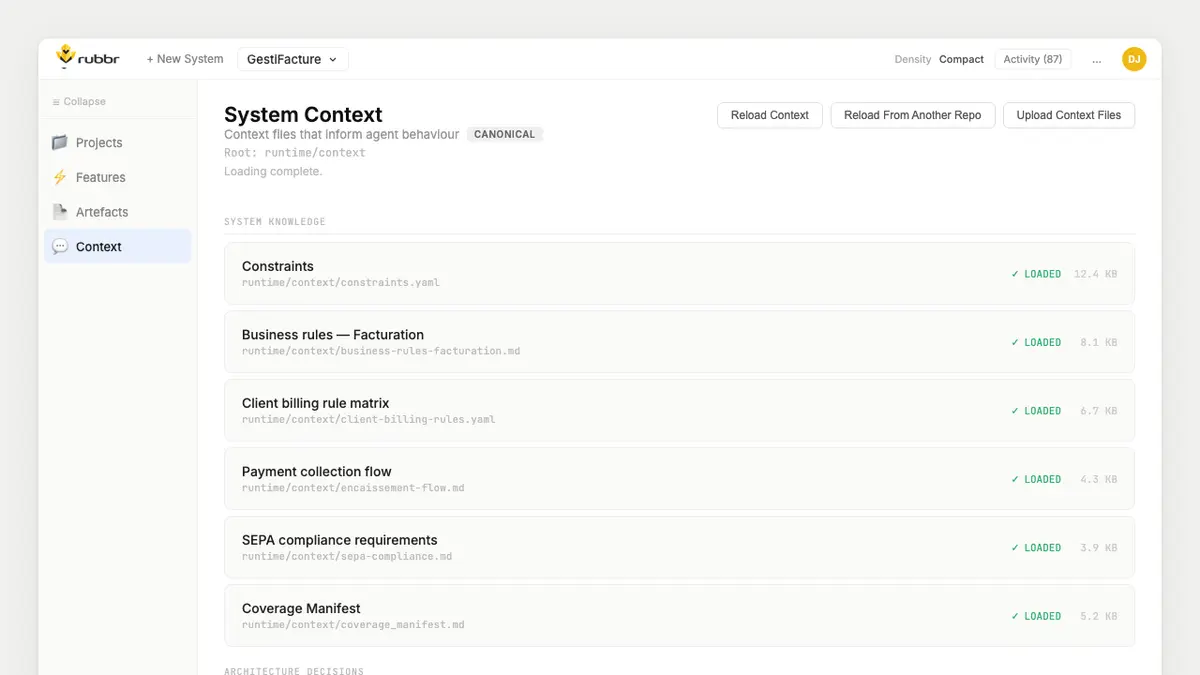

Over the past months, I have been building something to address exactly this problem: Rubbr, a controlled AI-driven software delivery platform for legacy systems.

Rubbr is LLM-neutral by design. It can operate with its own specialised AI agents and orchestrate existing agents within your stack. It does not replace them. It structures and constrains them.

Rubbr introduces a persistent representation of system context. Business rules, architectural constraints, ownership boundaries and historical decisions are made explicit and auditable.

Nothing executes without being explained, validated against system constraints, reviewed and owned. Changes are traceable across time, assumptions are surfaced and humans remain accountable. We call this context engineering.
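To make the idea concrete, here is a minimal sketch of such a gate in Python. It is an illustration only, not Rubbr's actual API: the names `Constraint`, `ProposedChange` and `gate`, the fields, and the example "billing module is frozen" rule are all hypothetical. It assumes a change may execute only once it carries an explanation, passes every declared constraint, and has a named reviewer and owner.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class ProposedChange:
    """A change an agent wants to make (hypothetical shape, for illustration)."""
    summary: str
    explanation: str = ""
    touched_paths: List[str] = field(default_factory=list)
    reviewer: Optional[str] = None
    owner: Optional[str] = None


@dataclass(frozen=True)
class Constraint:
    """An explicit system constraint: a name plus a check that returns True when respected."""
    name: str
    check: Callable[[ProposedChange], bool]


def gate(change: ProposedChange, constraints: List[Constraint]) -> List[str]:
    """Return the reasons a change is blocked; an empty list means it may execute."""
    problems: List[str] = []
    if not change.explanation.strip():
        problems.append("missing explanation")
    for constraint in constraints:
        if not constraint.check(change):
            problems.append(f"violates constraint: {constraint.name}")
    if change.reviewer is None:
        problems.append("not reviewed")
    if change.owner is None:
        problems.append("no owner")
    return problems


# Hypothetical architectural constraint: nothing under billing/ may be touched.
no_billing = Constraint(
    name="billing module is frozen",
    check=lambda ch: not any(p.startswith("billing/") for p in ch.touched_paths),
)

change = ProposedChange(
    summary="Rename invoice field",
    touched_paths=["billing/invoice.py"],
)

for reason in gate(change, [no_billing]):
    print(reason)
```

The point of the sketch is that the gate returns every blocking reason rather than a bare yes or no, so the record of why a change was held back is itself part of the audit trail.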

The prerequisite for safe AI-driven delivery is not better prompting. It is transforming implicit system knowledge into structured context that agents can reason against before they act.

AI agents amplify whatever structure they are given. If the context is incomplete, they amplify ambiguity. If the context is explicit and coherent, they amplify clarity. Before AI changes a system, the system must be able to explain itself.

If an AI-generated change were deployed to production today, could your organisation explain, six months from now, why it exists, which constraints it respected and who approved it? If not, the limiting factor is not AI capability. It is context.

If you are responsible for a system that cannot afford uncontrolled change, and would like to capture the full value of AI without sacrificing coherence or accountability, I would welcome a conversation.